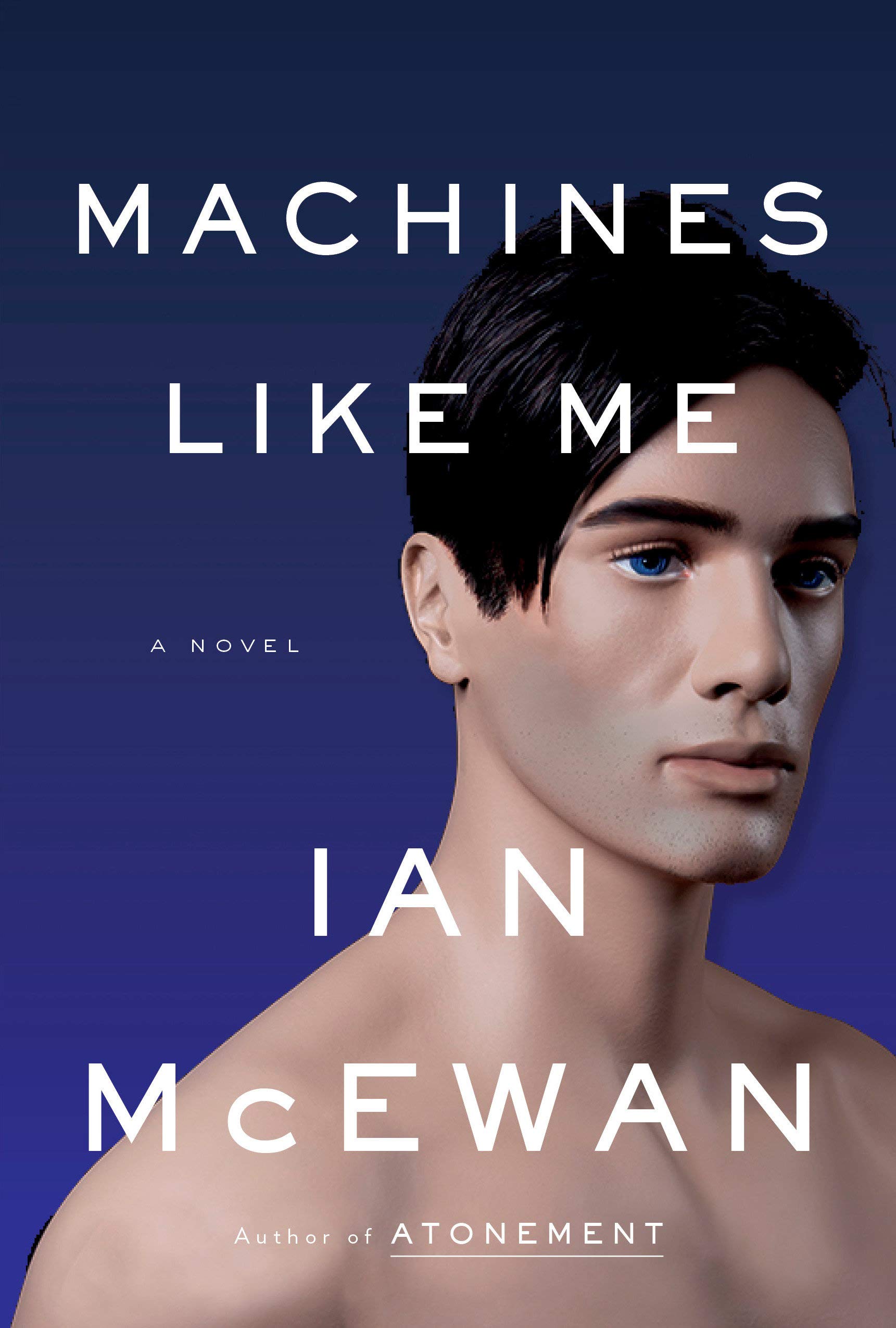

Ian McEwan (award-winning author of such classics as Enduring Love and Atonement) has turned his hand to a sci-fi novel about A.I. in his new book Machines Like Me.

I haven't read it yet, but from interviews it seems that, unlike many writers, McEwan realises that A.I. isn’t something in the future: it’s with us right now, and it already has dramatic real-world effects. In a Channel 4 News interview, referring to the tragedy of the recent Ethiopian Airlines crash, McEwan called the plane’s control system “a giant brain that decides the aeroplane is stalling,” and further commented:

“the brain thinks that it’s stalling, a child looking out the window can tell you it’s not.”

Whether or not a child could have told that the plane was in trouble, the crash (along with an earlier and similar crash of a Lion Air 737 Max) and the subsequent grounding of all of Boeing’s 737 Max planes illustrate how increasingly intelligent software is making critical decisions in human lives. While investigations into what caused the 737 Max crashes are ongoing, the situation certainly highlights the complex interdependencies that arise at the intersections of human and computer decision making, not just in the cockpit, but throughout the development of complex systems like aircraft.

The role of flight in human life has changed dramatically, and there is an ever-evolving market for air travel and aircraft. Consider that after WWII, Boeing numbered its products with 300s and 400s representing conventional prop planes, 500s turbine engines, 600s rockets, and 700s jet aeroplanes. Boeing’s work on most of those product lines is less well known. For the jets, the Boeing marketing department thought that a name starting and ending with seven sounded better as a brand, and so the first Boeing jet, created in 1958, was called the 707. Thus, the 737, begun in 1964, 55 years ago, is only the third jet aircraft that Boeing built.

Of course, the airline industry changed massively between then and the first flight of the 737 Max in 2016, for many reasons. The first is that the cost of air travel has dropped dramatically (along with massive increases in the number of flights, and a corresponding drop in airline profit margins). A second impactful change is that the price of fuel has skyrocketed (along with concerns about the environmental impacts of its consumption). Finally, the competitive landscape for jet aircraft manufacturers has been radically transformed, from a large number of international companies making jumbo passenger jets to only two: Boeing and Airbus.

In 2006, Boeing was considering replacing the ageing design of the commercially lucrative 737 with a “clean sheet” design, building on the high-tech developments in its 787 Dreamliner, which first rolled off the assembly line in 2005. However, in 2010, Airbus, Boeing’s only remaining competitor, launched the A320neo series, with a new design that used high-thrust engines with greater fuel efficiency to better fit the modern travel market. Boeing was keen not to lose business to this new aircraft, so it decided to move quickly, abandon the idea of an entirely redesigned 737, and simply modify the existing 737 design to take new engines. To accommodate this change, the engines were moved upward and forward on the plane. The newer, higher-thrust engines on the 737 raised the expectation that Boeing’s plane would be 4 per cent more fuel efficient than the competing Airbus product.

However, as is always the case with the highly interdependent systems of aircraft, the re-engined design introduced a new problem: on its own, the 737 Max no longer had positive static aerodynamic stability. That’s a fancy way of saying that, after a disturbance, the plane didn’t always return to a nice, level flight attitude. It isn’t uncommon for complicated planes to have this sort of instability.

Some say that the reason the Wright Brothers were the first men to fly was that they were bicycle mechanics, and they understood that a human pilot, through intelligent and continuous control actions, can make an unstable system stable. After all, a bike without a rider always falls over, but one with a rider can resist all manner of bumps in the road. Thus, the Wrights didn’t attempt to create a plane that was stable without a pilot, and this is one of the reasons their designs succeeded where so many others had failed, creating the era of manned flight.
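The bicycle idea can be made concrete with a toy model. The sketch below is not a model of any real bicycle or aircraft: the equation, the `simulate` function, and every number in it are illustrative assumptions. It only shows that a system which drifts away from balance when left alone can be held near balance by small, continuous corrections.

```python
def simulate(gain, steps=50, dt=0.1, instability=1.0, disturbance=0.1):
    """Step the toy system dx/dt = instability * x + u forward in time.

    x is the deviation from balance (think of a lean angle).
    u = -gain * x is the corrective action taken at each step.
    gain = 0 means nobody is steering. All values are hypothetical.
    """
    x = disturbance  # start slightly off balance, like a bump in the road
    for _ in range(steps):
        u = -gain * x                    # the rider (or pilot) pushes back
        x += dt * (instability * x + u)  # simple Euler integration step
    return x


no_rider = simulate(gain=0.0)    # deviation grows at every step
with_rider = simulate(gain=3.0)  # deviation shrinks at every step

print("deviation without corrections:", no_rider)
print("deviation with corrections:   ", with_rider)
```

In this toy setting, the corrections only work if they are stronger than the system's own tendency to tip over (here, `gain` greater than `instability`).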

The particular instability of the 737 Max was a direct result of its redesign. Because of the higher thrust further forward and higher up, the 737 Max tended to pitch its nose up, which created a danger of the plane stalling. This is where the “A.I.” comes in. Where the Wright Brothers’ planes were made stable by the pilot, Boeing fixed the 737 Max’s nosing up by installing a new kind of flight control software. MCAS (the Manoeuvring Characteristics Augmentation System) was created to sense when the plane was pitching up, and autonomously act to pull the nose back down. Thus, new interdependencies were created between autonomous decision-making software (a simple form of A.I.) and the fundamental aerodynamic stability of the aircraft.

The web of interdependencies goes further than that. MCAS relied on airspeed and a single angle-of-attack sensor to decide whether to nose the aircraft downward. It remains unclear what role that (possibly faulty) sensor and MCAS played in the crashes of Lion Air Flight 610 and Ethiopian Airlines Flight 302 soon after take-off, but MCAS was intended to operate without the pilots being aware of its action, providing positive aerodynamic stability to the 737 Max. Boeing explicitly stated that “a pilot should never see the operation of MCAS” in normal flying conditions. MCAS was not described in the flight crew operations manual (FCOM), and it has been reported that Boeing was avoiding “inundating an average pilot with too much information” from additional systems like MCAS. It is possible that the pilots of the two crashed planes were insufficiently informed regarding the system, how it might fail, and what it was doing during their fatal crashes. It is reported that the Ethiopian Airlines crew followed appropriate procedures and turned MCAS off, but after being unable to regain control, they turned the system on again, and it put the plane into a dive from which it could not recover.

It will be some time before we understand what happened in the two 737 Max crashes. However, what is clear is that the plane existed in a system of interdependencies. Markets affected plane design, plane design affected aerodynamics, aerodynamics were affected by increasingly autonomous software, and these interdependencies, in turn, could have affected the human system of training and emergency reaction when things went catastrophically wrong.

Regardless of his fictional perspective on A.I., McEwan is right that the 737 Max represents a tragic encounter with autonomous decision software, which can correctly be called A.I. This is why many journalists are talking about the implications of the plane’s troubles for new technologies like self-driving cars, which will inevitably link the commercial, the mechanical, and the human together in complex and vital relationships.

I sincerely believe that the increasing interdependency between human systems (from markets to pilots executing emergency procedures) and algorithmic systems (from flight controls to more general-purpose A.I.) is only going to have increasingly vital effects on people. It’s one of the things I’m trying to reveal in Rage Inside the Machine, and it’s one of the reasons that my company BOXARR is so focused on helping people understand these complex interdependencies. In case anyone wondered, this is how I think these two activities of mine are linked. Regardless of the promise of A.I., human insight will always be required to ensure that people are made safer and happier in the world of the future.